Physical models of rigid bodies are used for sound synthesis in applications from virtual environments to music production. Traditional methods such as modal synthesis often rely on computationally expensive numerical solvers, while recent deep learning approaches are limited by the post-processing that their outputs require. In this work, we present a novel end-to-end framework for training a deep neural network to generate modal resonators for a given 2D shape and material, using a bank of differentiable IIR filters. We demonstrate our method on a dataset of synthetic objects but train our model using an audio-domain objective, paving the way for physically-informed synthesisers to be learned directly from recordings of real-world objects.
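To make the idea of a bank of modal resonators concrete, here is a minimal sketch of the forward pass only, assuming each mode is a two-pole IIR filter described by a frequency, a decay rate, and a gain. The function name `modal_resonator_bank`, its parameters, and the example values are illustrative assumptions rather than the implementation used in this work. The sketch uses plain NumPy, so it is not differentiable as written; a trainable version would express the same recursion in an autodiff framework.

```python
import numpy as np

def modal_resonator_bank(freqs_hz, decays, gains, excitation, sample_rate=16000):
    """Filter `excitation` through parallel two-pole resonators and sum them.

    Hypothetical helper for illustration. Each mode k has a complex pole pair
    at radius * exp(+/- j * omega), so its impulse response is
    radius**n * sin(omega * n).
    """
    freqs_hz = np.asarray(freqs_hz, dtype=float)
    decays = np.asarray(decays, dtype=float)
    gains = np.asarray(gains, dtype=float)
    x = np.asarray(excitation, dtype=float)

    omega = 2.0 * np.pi * freqs_hz / sample_rate  # pole angle (radians/sample)
    radius = np.exp(-decays / sample_rate)        # pole radius, < 1 when decay > 0

    # Difference equation per mode:
    #   y[n] = a1 * y[n-1] + a2 * y[n-2] + b1 * x[n-1]
    a1 = 2.0 * radius * np.cos(omega)
    a2 = -radius ** 2
    b1 = radius * np.sin(omega)

    y1 = np.zeros_like(freqs_hz)  # y[n-1] for every mode
    y2 = np.zeros_like(freqs_hz)  # y[n-2] for every mode
    x1 = 0.0                      # x[n-1]
    out = np.zeros(len(x))
    for n in range(len(x)):
        y0 = a1 * y1 + a2 * y2 + b1 * x1
        out[n] = np.dot(gains, y0)  # weighted sum over modes
        y2, y1, x1 = y1, y0, x[n]
    return out

# Usage: strike the bank with a unit impulse (all values are made up).
impulse = np.zeros(16000)
impulse[0] = 1.0
audio = modal_resonator_bank(
    freqs_hz=[440.0, 1130.0, 2210.0],
    decays=[8.0, 15.0, 30.0],
    gains=[1.0, 0.5, 0.25],
    excitation=impulse,
)
```

In this formulation, a network would only need to predict the per-mode frequencies, decays, and gains; the filter bank then turns those parameters into audio that can be compared against a target recording.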

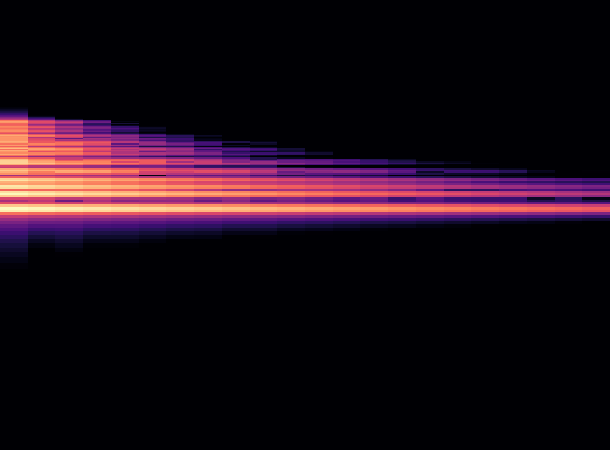

Compare the original audio and the generated audio.

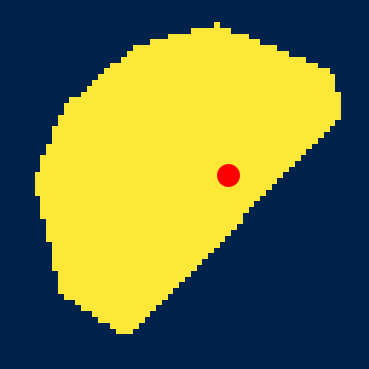

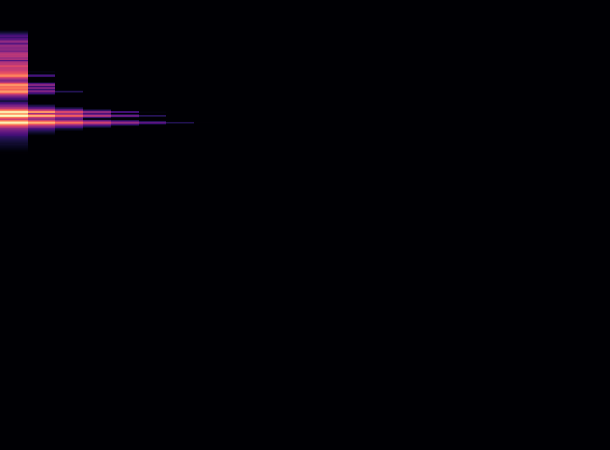

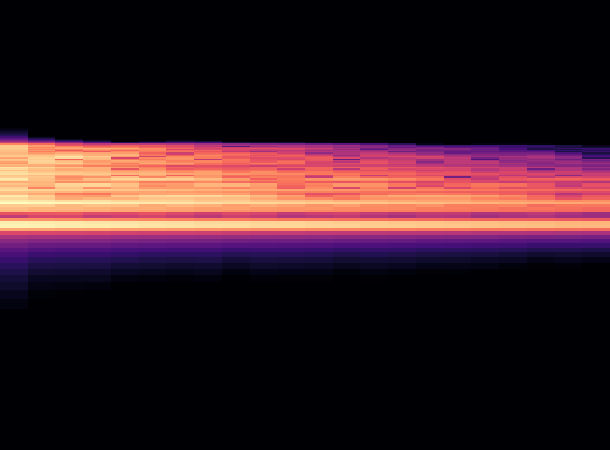

Interpolate between two shapes, and see how the audio changes.

In this case, the coordinates in the shapes are not restricted to discretized positions, as they would be with FEM, which requires discretization. Because our network acts as a neural field, we can query it at continuous coordinate values and obtain sound for the shapes in between as we interpolate from one shape to the other.